ultralytics 8.0.84 results JSON outputs (#2171)

Co-authored-by: Laughing <61612323+Laughing-q@users.noreply.github.com>
Co-authored-by: Yonghye Kwon <developer.0hye@gmail.com>
Co-authored-by: Ayush Chaurasia <ayush.chaurarsia@gmail.com>
Co-authored-by: pre-commit-ci[bot] <66853113+pre-commit-ci[bot]@users.noreply.github.com>
Co-authored-by: Talia Bender <85292283+taliabender@users.noreply.github.com>

82

docs/yolov5/environments/aws_quickstart_tutorial.md

Normal file

@@ -0,0 +1,82 @@

# YOLOv5 🚀 on AWS Deep Learning Instance: A Comprehensive Guide

This guide will help new users run YOLOv5 on an Amazon Web Services (AWS) Deep Learning instance. AWS offers a [Free Tier](https://aws.amazon.com/free/) and a [credit program](https://aws.amazon.com/activate/) for a quick and affordable start.

Other quickstart options for YOLOv5 include our [Colab Notebook](https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb) <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>, [GCP Deep Learning VM](https://docs.ultralytics.com/yolov5/environments/google_cloud_quickstart_tutorial), and our Docker image at [Docker Hub](https://hub.docker.com/r/ultralytics/yolov5) <a href="https://hub.docker.com/r/ultralytics/yolov5"><img src="https://img.shields.io/docker/pulls/ultralytics/yolov5?logo=docker" alt="Docker Pulls"></a>. *Updated: 21 April 2023*.

## 1. AWS Console Sign-in

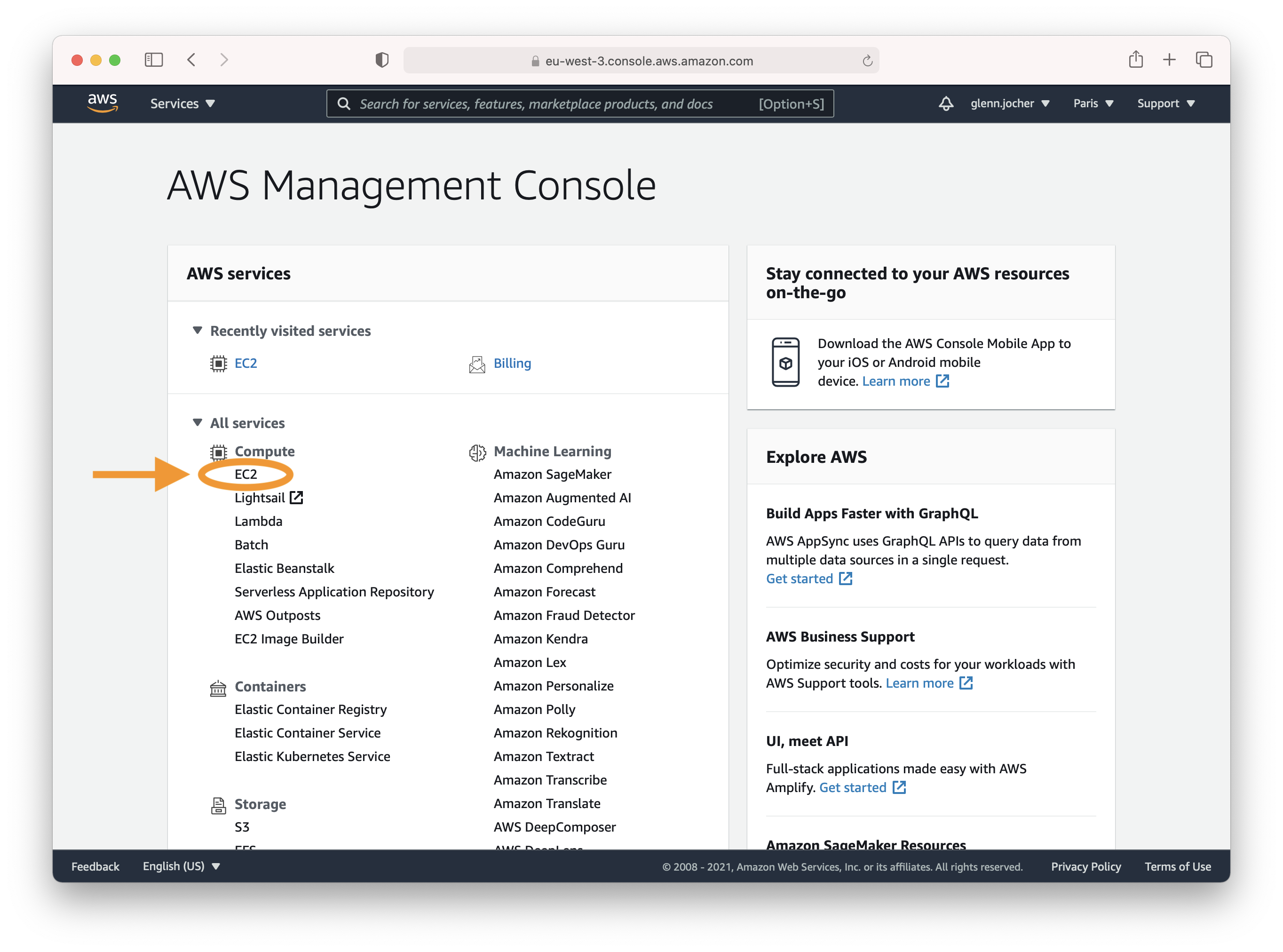

Create an account or sign in to the AWS console at [https://aws.amazon.com/console/](https://aws.amazon.com/console/) and select the **EC2** service.

## 2. Launch Instance

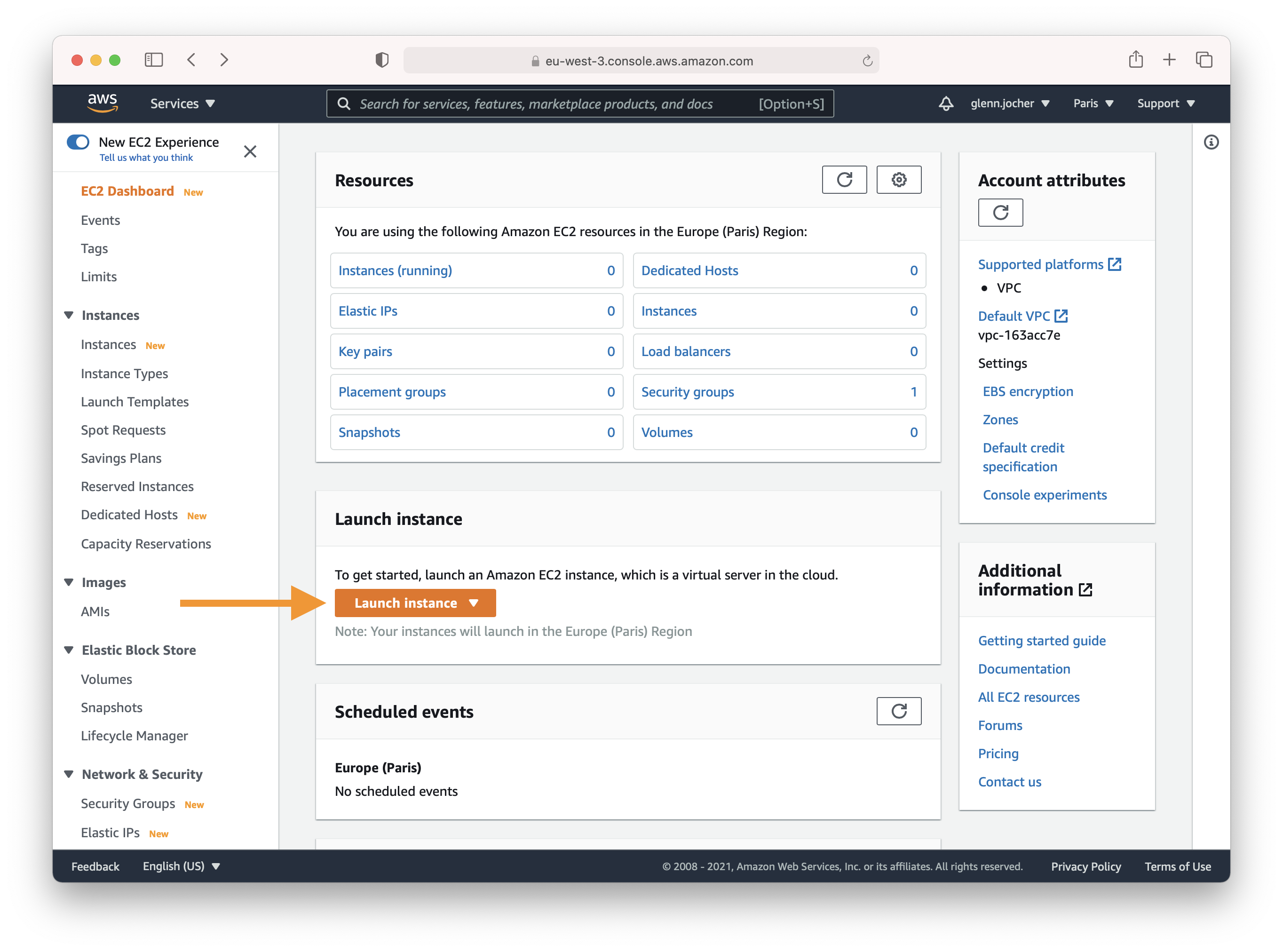

In the EC2 section of the AWS console, click the **Launch instance** button.

### Choose an Amazon Machine Image (AMI)

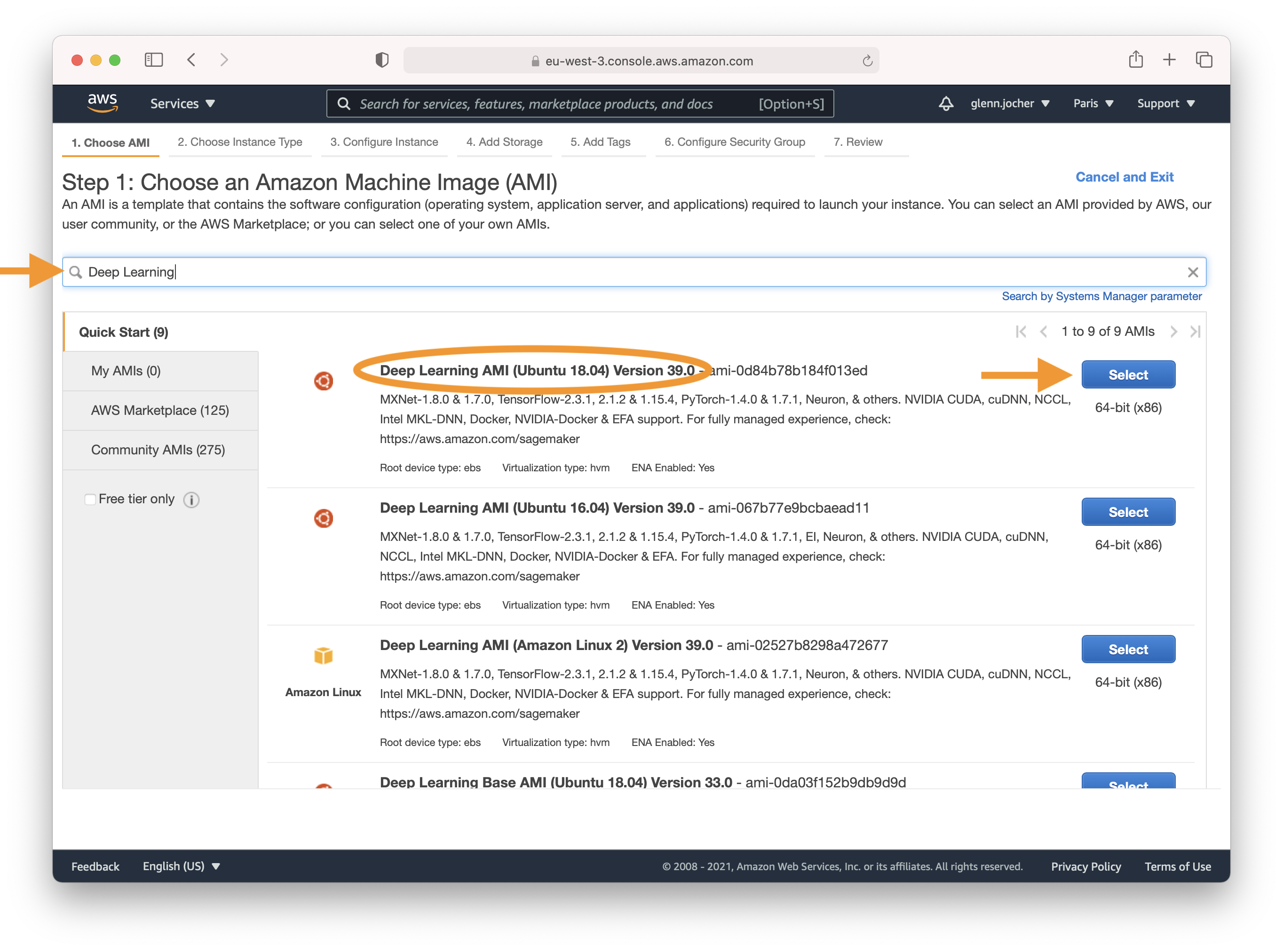

Enter 'Deep Learning' in the search field and select the most recent Ubuntu Deep Learning AMI (recommended), or an alternative Deep Learning AMI. For more information on selecting an AMI, see [Choosing Your DLAMI](https://docs.aws.amazon.com/dlami/latest/devguide/options.html).

### Select an Instance Type

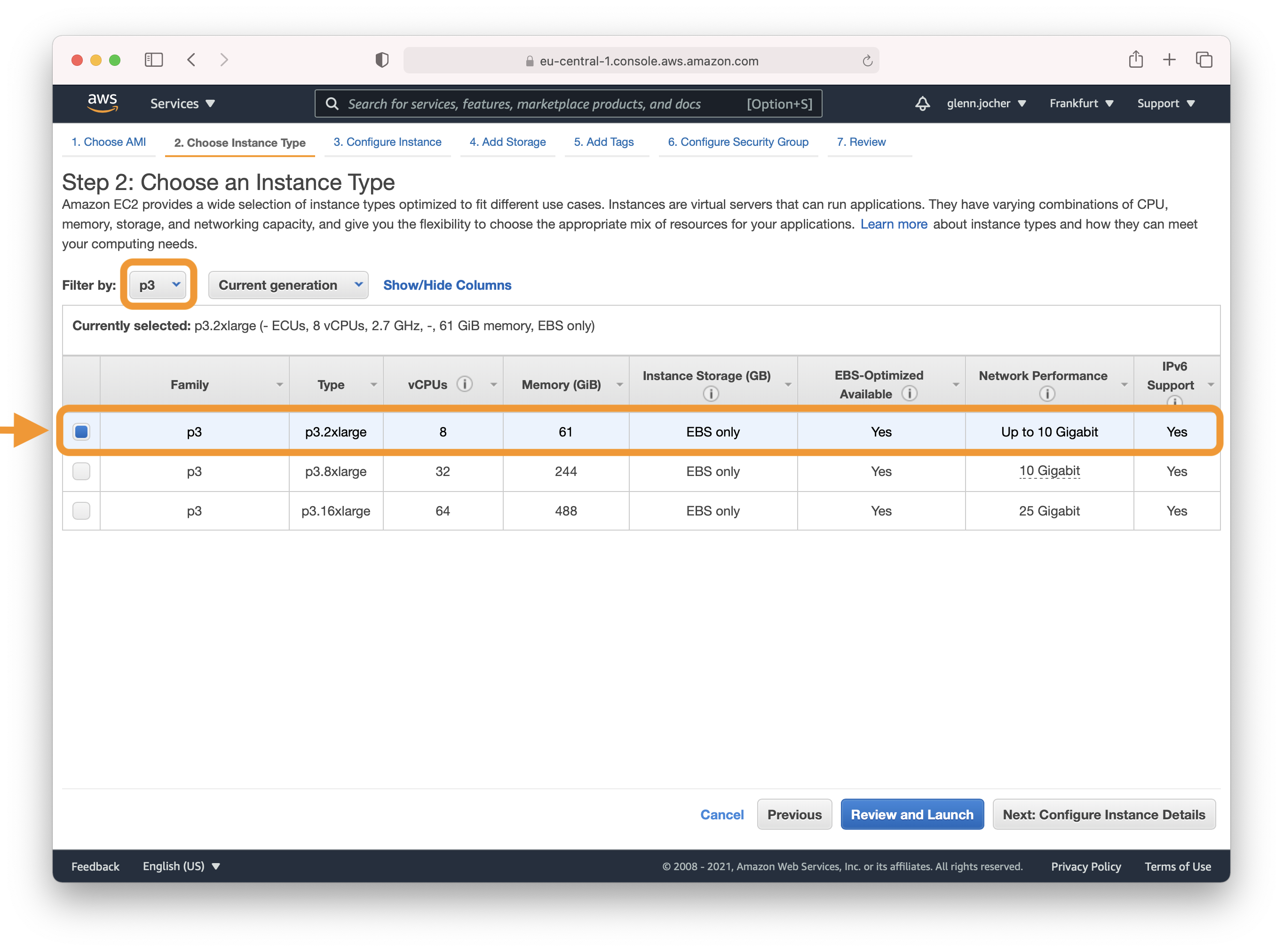

A GPU instance is recommended for most deep learning purposes: training new models is faster on a GPU instance than on a CPU instance. Multi-GPU instances, or distributed training across multiple GPU instances, scale sub-linearly: each added GPU increases throughput, but by less than a proportional amount. To set up distributed training, see [Distributed Training](https://docs.aws.amazon.com/dlami/latest/devguide/distributed-training.html).

**Note:** The size of your model should be a factor in selecting an instance. If your model exceeds an instance's available RAM, select a different instance type with enough memory for your application.
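
As a rough sanity check for the note above, the sketch below estimates how much memory a model's weights, gradients, and optimizer state would occupy during training. The function name, the 50-million-parameter example, and the assumption of 32-bit values with two optimizer buffers per parameter are illustrative assumptions, not figures from this guide. Activation memory, which often dominates, is not included, so treat the result as a lower bound.

```python
def estimate_training_memory_gib(num_params, bytes_per_value=4, optimizer_states=2):
    """Rough lower bound: weights + gradients + optimizer buffers (activations excluded)."""
    copies = 1 + 1 + optimizer_states  # weights, gradients, optimizer buffers
    return num_params * bytes_per_value * copies / 1024**3


# Hypothetical 50-million-parameter model stored as 32-bit floats
print(f"{estimate_training_memory_gib(50_000_000):.2f} GiB")
```

If the estimate is already close to an instance's memory, a larger instance type is likely the safer choice.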

Refer to [EC2 Instance Types](https://aws.amazon.com/ec2/instance-types/) and choose Accelerated Computing to see the different GPU instance options.

For more information on GPU monitoring and optimization, see [GPU Monitoring and Optimization](https://docs.aws.amazon.com/dlami/latest/devguide/tutorial-gpu.html). For pricing, see [On-Demand Pricing](https://aws.amazon.com/ec2/pricing/on-demand/) and [Spot Pricing](https://aws.amazon.com/ec2/spot/pricing/).

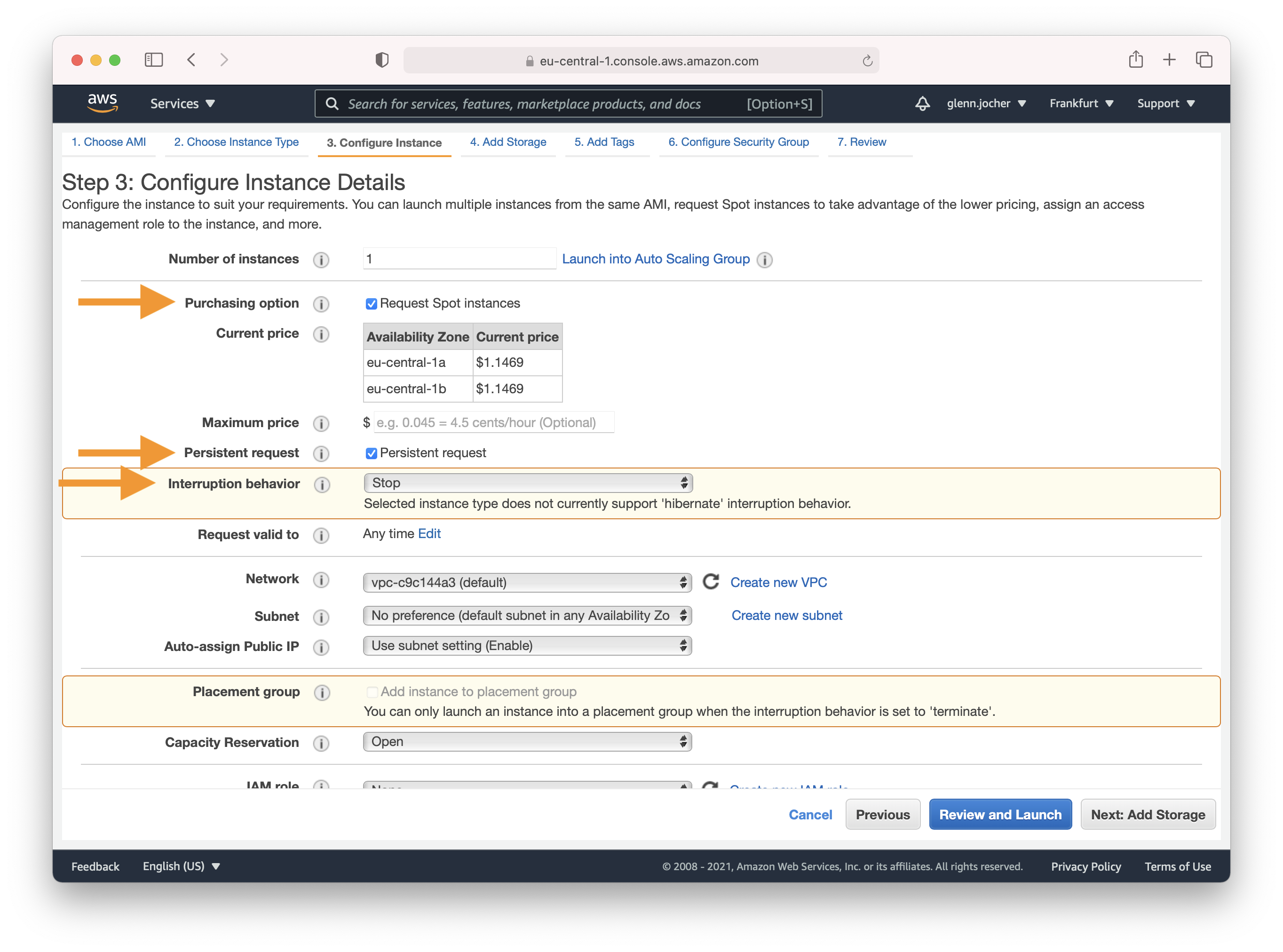

### Configure Instance Details

Amazon EC2 Spot Instances let you take advantage of unused EC2 capacity in the AWS cloud. Spot Instances are available at up to a 70% discount compared to On-Demand prices. We recommend a persistent Spot Instance, which saves your data and restarts automatically when Spot capacity becomes available again after a termination. For full-price On-Demand instances, leave these settings at their default values.
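
To make the discount concrete, here is a minimal sketch of the arithmetic. The $3.00 hourly rate and the 24-hour run are made-up inputs, and `spot_cost` is a hypothetical helper; actual Spot prices vary by instance type, region, and time, so check the pricing pages linked above.

```python
def spot_cost(on_demand_hourly_usd, hours, discount=0.70):
    """Cost of a run at a given Spot discount relative to the On-Demand rate."""
    if not 0.0 <= discount < 1.0:
        raise ValueError("discount must be in [0, 1)")
    return on_demand_hourly_usd * hours * (1.0 - discount)


# Hypothetical $3.00/hour On-Demand rate for a 24-hour training run
on_demand = 3.00 * 24
spot = spot_cost(3.00, 24)
print(f"On-Demand: ${on_demand:.2f}, Spot at a 70% discount: ${spot:.2f}")
```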

Complete Steps 4-7 to finalize your instance hardware and security settings, and then launch the instance.

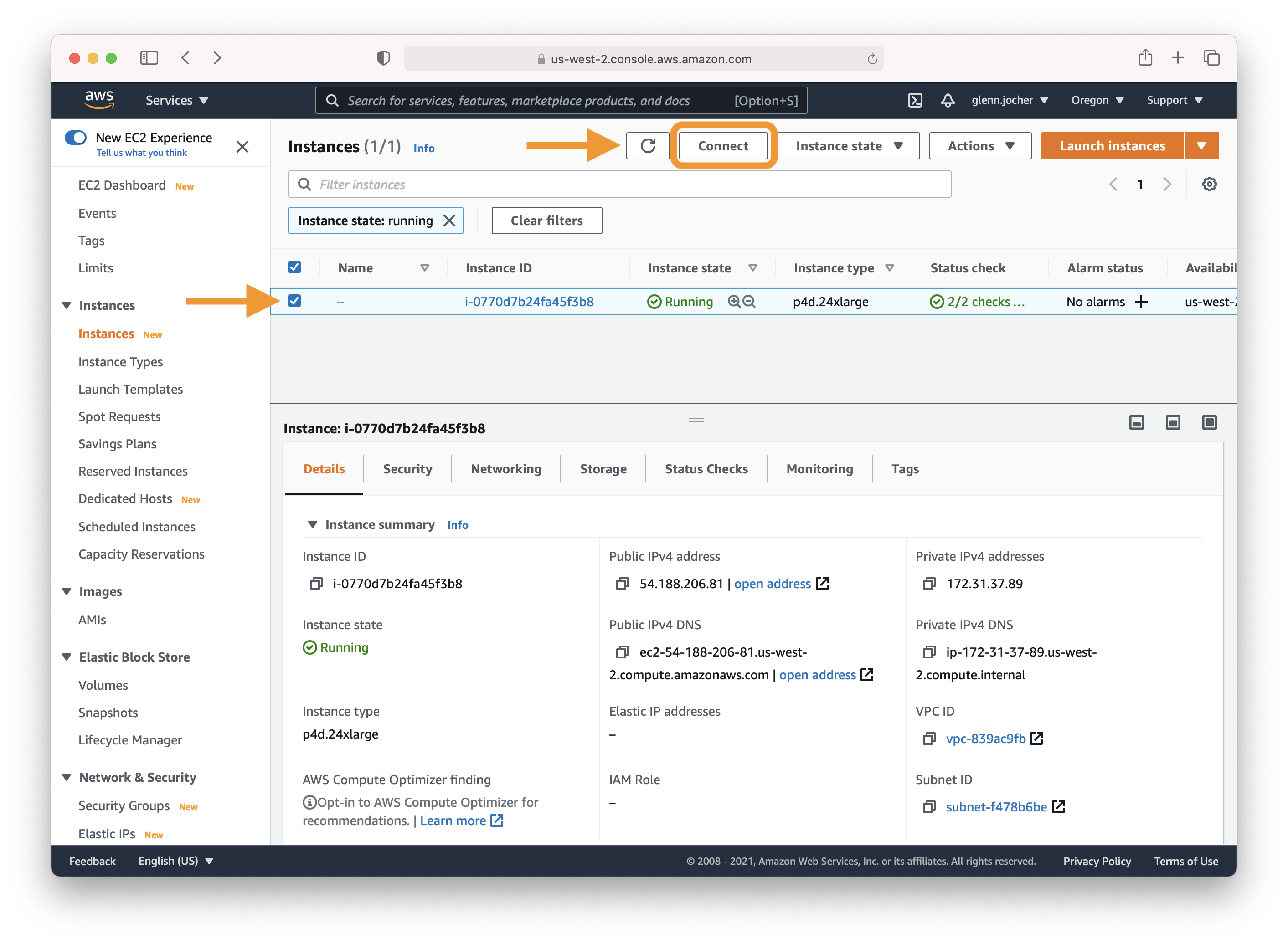

## 3. Connect to Instance

Select the checkbox next to your running instance, and then click Connect. Copy and paste the SSH terminal command into a terminal of your choice to connect to your instance.

## 4. Run YOLOv5

Once you have logged in to your instance, clone the repository and install the dependencies in a [**Python>=3.7.0**](https://www.python.org/) environment, including [**PyTorch>=1.7**](https://pytorch.org/get-started/locally/). [Models](https://github.com/ultralytics/yolov5/tree/master/models) and [datasets](https://github.com/ultralytics/yolov5/tree/master/data) download automatically from the latest YOLOv5 [release](https://github.com/ultralytics/yolov5/releases).

```bash
git clone https://github.com/ultralytics/yolov5  # clone
cd yolov5
pip install -r requirements.txt  # install
```

Then, start training, testing, detecting, and exporting YOLOv5 models:

```bash
python train.py  # train a model
python val.py --weights yolov5s.pt  # validate a model for Precision, Recall, and mAP
python detect.py --weights yolov5s.pt --source path/to/images  # run inference on images and videos
python export.py --weights yolov5s.pt --include onnx coreml tflite  # export models to other formats
```
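
Before starting a long training run, it can help to confirm that the instance's GPU is visible to the driver. The sketch below is one possible check using only the Python standard library. It assumes the `nvidia-smi` tool that ships with the NVIDIA driver is on the `PATH`, and `gpu_driver_summary` is a hypothetical helper name.

```python
import shutil
import subprocess


def gpu_driver_summary():
    """Return the output of `nvidia-smi -L`, or None if the tool is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return None
    result = subprocess.run(["nvidia-smi", "-L"], capture_output=True, text=True)
    return result.stdout.strip() if result.returncode == 0 else None


summary = gpu_driver_summary()
print(summary if summary else "No NVIDIA GPU reported by nvidia-smi.")
```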

## Optional Extras

Add 64GB of swap memory (to `--cache` large datasets):

```bash
sudo fallocate -l 64G /swapfile
sudo chmod 600 /swapfile
sudo mkswap /swapfile
sudo swapon /swapfile
free -h  # check memory
```
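
As an alternative to reading the `free -h` output by eye, the following sketch parses the `SwapTotal` line that Linux reports in `/proc/meminfo`. The helper name `swap_total_gib` is an assumption for illustration, and the script only reports a value on Linux.

```python
def swap_total_gib(meminfo_text):
    """Parse the SwapTotal line (reported in kB) from /proc/meminfo-style text."""
    for line in meminfo_text.splitlines():
        if line.startswith("SwapTotal:"):
            kib = int(line.split()[1])
            return kib / 1024**2
    return 0.0


try:
    with open("/proc/meminfo") as f:  # Linux only
        print(f"Swap: {swap_total_gib(f.read()):.1f} GiB")
except OSError:
    print("/proc/meminfo is not available on this platform")
```

After the commands above, the reported value should be close to 64 GiB.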

Now you have successfully set up and run YOLOv5 on an AWS Deep Learning instance. Enjoy training, testing, and deploying your object detection models!

58

docs/yolov5/environments/docker_image_quickstart_tutorial.md

Normal file

@@ -0,0 +1,58 @@

# Get Started with YOLOv5 🚀 in Docker

This tutorial will guide you through the process of setting up and running YOLOv5 in a Docker container.

You can also explore other quickstart options for YOLOv5, such as our [Colab Notebook](https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb) <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>, [GCP Deep Learning VM](https://docs.ultralytics.com/yolov5/environments/google_cloud_quickstart_tutorial), and [Amazon AWS](https://docs.ultralytics.com/yolov5/environments/aws_quickstart_tutorial). *Updated: 21 April 2023*.

## Prerequisites

1. **NVIDIA Driver**: Version 455.23 or higher. Download from [NVIDIA's website](https://www.nvidia.com/Download/index.aspx).
2. **NVIDIA-Docker**: Allows Docker to interact with your local GPU. Installation instructions are available on the [NVIDIA-Docker GitHub repository](https://github.com/NVIDIA/nvidia-docker).
3. **Docker Engine - CE**: Version 19.03 or higher. Download and installation instructions can be found on the [Docker website](https://docs.docker.com/install/).
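
The minimum versions above can be checked programmatically. The sketch below compares dotted version strings as integer tuples. The helper names and the "installed" versions are made-up examples, and it assumes purely numeric versions (a suffix such as `-ce` would need to be stripped first).

```python
def parse_version(text):
    """Turn '455.23' or '19.03.12' into a tuple of ints for comparison."""
    return tuple(int(part) for part in text.strip().split("."))


def meets_minimum(installed, minimum):
    """True if the installed version is at least the minimum version."""
    a, b = parse_version(installed), parse_version(minimum)
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))  # pad so '19.03' and '19.03.0' compare equal
    b += (0,) * (width - len(b))
    return a >= b


# Minimums come from the list above; the installed versions are hypothetical
print("Driver OK:", meets_minimum("470.57.02", "455.23"))
print("Docker OK:", meets_minimum("18.09.1", "19.03"))
```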

## Step 1: Pull the YOLOv5 Docker Image

The Ultralytics YOLOv5 DockerHub repository is available at [https://hub.docker.com/r/ultralytics/yolov5](https://hub.docker.com/r/ultralytics/yolov5). Docker Autobuild ensures that the `ultralytics/yolov5:latest` image is always in sync with the most recent repository commit. To pull the latest image, run the following command:

```bash
sudo docker pull ultralytics/yolov5:latest
```

## Step 2: Run the Docker Container

### Basic container:

Run an interactive instance of the YOLOv5 Docker image (called a "container") using the `-it` flag:

```bash
sudo docker run --ipc=host -it ultralytics/yolov5:latest
```

### Container with local file access:

To run a container with access to local files (e.g., COCO training data in `/datasets`), use the `-v` flag:

```bash
sudo docker run --ipc=host -it -v "$(pwd)"/datasets:/usr/src/datasets ultralytics/yolov5:latest
```

### Container with GPU access:

To run a container with GPU access, use the `--gpus all` flag:

```bash
sudo docker run --ipc=host -it --gpus all ultralytics/yolov5:latest
```
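
The `-v` and `--gpus all` flags can be combined in one command. As a minimal sketch, the hypothetical helper below assembles such a command from the flags shown in this step. The host path is a made-up example, and the helper only builds and prints the command rather than running it.

```python
import shlex


def docker_run_command(image="ultralytics/yolov5:latest", gpus=False, volumes=None):
    """Compose a `docker run` argument list from the flags shown in this step."""
    cmd = ["docker", "run", "--ipc=host", "-it"]
    if gpus:
        cmd += ["--gpus", "all"]
    for host_path, container_path in (volumes or {}).items():
        cmd += ["-v", f"{host_path}:{container_path}"]
    return cmd + [image]


# Hypothetical host path; prefix the command with sudo if your Docker setup needs it
cmd = docker_run_command(gpus=True, volumes={"/home/ubuntu/datasets": "/usr/src/datasets"})
print(shlex.join(cmd))
```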

## Step 3: Use YOLOv5 🚀 within the Docker Container

Now you can train, test, detect, and export YOLOv5 models within the running Docker container:

```bash
python train.py  # train a model
python val.py --weights yolov5s.pt  # validate a model for Precision, Recall, and mAP
python detect.py --weights yolov5s.pt --source path/to/images  # run inference on images and videos
python export.py --weights yolov5s.pt --include onnx coreml tflite  # export models to other formats
```

<p align="center"><img width="1000" src="https://user-images.githubusercontent.com/26833433/142224770-6e57caaf-ac01-4719-987f-c37d1b6f401f.png"></p>

43

docs/yolov5/environments/google_cloud_quickstart_tutorial.md

Normal file

@@ -0,0 +1,43 @@

# Run YOLOv5 🚀 on Google Cloud Platform (GCP) Deep Learning Virtual Machine (VM) ⭐

This tutorial will guide you through the process of setting up and running YOLOv5 on a GCP Deep Learning VM. New GCP users are eligible for a [$300 free credit offer](https://cloud.google.com/free/docs/gcp-free-tier#free-trial).

You can also explore other quickstart options for YOLOv5, such as our [Colab Notebook](https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb) <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>, [Amazon AWS](https://docs.ultralytics.com/yolov5/environments/aws_quickstart_tutorial) and our Docker image at [Docker Hub](https://hub.docker.com/r/ultralytics/yolov5) <a href="https://hub.docker.com/r/ultralytics/yolov5"><img src="https://img.shields.io/docker/pulls/ultralytics/yolov5?logo=docker" alt="Docker Pulls"></a>. *Updated: 21 April 2023*.

**Last Updated**: 6 May 2022

## Step 1: Create a Deep Learning VM

1. Go to the [GCP marketplace](https://console.cloud.google.com/marketplace/details/click-to-deploy-images/deeplearning) and select a **Deep Learning VM**.
2. Choose an **n1-standard-8** instance (with 8 vCPUs and 30 GB memory).
3. Add a GPU of your choice.
4. Check 'Install NVIDIA GPU driver automatically on first startup?'
5. Select a 300 GB SSD Persistent Disk for sufficient I/O speed.
6. Click 'Deploy'.

The preinstalled [Anaconda](https://docs.anaconda.com/anaconda/packages/pkg-docs/) Python environment includes all dependencies.

<img width="1000" alt="GCP Marketplace" src="https://user-images.githubusercontent.com/26833433/105811495-95863880-5f61-11eb-841d-c2f2a5aa0ffe.png">

## Step 2: Set Up the VM

Clone the YOLOv5 repository and install the [requirements.txt](https://github.com/ultralytics/yolov5/blob/master/requirements.txt) in a [**Python>=3.7.0**](https://www.python.org/) environment, including [**PyTorch>=1.7**](https://pytorch.org/get-started/locally/). [Models](https://github.com/ultralytics/yolov5/tree/master/models) and [datasets](https://github.com/ultralytics/yolov5/tree/master/data) will be downloaded automatically from the latest YOLOv5 [release](https://github.com/ultralytics/yolov5/releases).

```bash
git clone https://github.com/ultralytics/yolov5  # clone
cd yolov5
pip install -r requirements.txt  # install
```

## Step 3: Run YOLOv5 🚀 on the VM

You can now train, test, detect, and export YOLOv5 models on your VM:

```bash
python train.py  # train a model
python val.py --weights yolov5s.pt  # validate a model for Precision, Recall, and mAP
python detect.py --weights yolov5s.pt --source path/to/images  # run inference on images and videos
python export.py --weights yolov5s.pt --include onnx coreml tflite  # export models to other formats
```

<img width="1000" alt="GCP terminal" src="https://user-images.githubusercontent.com/26833433/142223900-275e5c9e-e2b5-43f7-a21c-35c4ca7de87c.png">

@@ -24,22 +24,22 @@ This powerful deep learning framework is built on the PyTorch platform and has g

## Tutorials

* [Train Custom Data](train_custom_data.md) 🚀 RECOMMENDED
* [Tips for Best Training Results](tips_for_best_training_results.md) ☘️
* [Multi-GPU Training](multi_gpu_training.md)
* [PyTorch Hub](pytorch_hub.md) 🌟 NEW
* [TFLite, ONNX, CoreML, TensorRT Export](export.md) 🚀
* [NVIDIA Jetson platform Deployment](jetson_nano.md) 🌟 NEW
* [Test-Time Augmentation (TTA)](tta.md)
* [Model Ensembling](ensemble.md)
* [Model Pruning/Sparsity](pruning_sparsity.md)
* [Hyperparameter Evolution](hyp_evolution.md)
* [Transfer Learning with Frozen Layers](transfer_learn_frozen.md)
* [Architecture Summary](architecture.md) 🌟 NEW
* [Roboflow for Datasets, Labeling, and Active Learning](roboflow.md)
* [ClearML Logging](clearml.md) 🌟 NEW
* [YOLOv5 with Neural Magic's Deepsparse](neural_magic.md) 🌟 NEW
* [Comet Logging](comet.md) 🌟 NEW

* [Train Custom Data](tutorials/train_custom_data.md) 🚀 RECOMMENDED
* [Tips for Best Training Results](tutorials/tips_for_best_training_results.md) ☘️
* [Multi-GPU Training](tutorials/multi_gpu_training.md)
* [PyTorch Hub](tutorials/pytorch_hub_model_loading.md) 🌟 NEW
* [TFLite, ONNX, CoreML, TensorRT Export](tutorials/model_export.md) 🚀
* [NVIDIA Jetson platform Deployment](tutorials/running_on_jetson_nano.md) 🌟 NEW
* [Test-Time Augmentation (TTA)](tutorials/test_time_augmentation.md)
* [Model Ensembling](tutorials/model_ensembling.md)
* [Model Pruning/Sparsity](tutorials/model_pruning_and_sparsity.md)
* [Hyperparameter Evolution](tutorials/hyperparameter_evolution.md)
* [Transfer Learning with Frozen Layers](tutorials/transfer_learning_with_frozen_layers.md)
* [Architecture Summary](tutorials/architecture_description.md) 🌟 NEW
* [Roboflow for Datasets, Labeling, and Active Learning](tutorials/roboflow_datasets_integration.md)
* [ClearML Logging](tutorials/clearml_logging_integration.md) 🌟 NEW
* [YOLOv5 with Neural Magic's Deepsparse](tutorials/neural_magic_pruning_quantization.md) 🌟 NEW
* [Comet Logging](tutorials/comet_logging_integration.md) 🌟 NEW

## Environments

@@ -50,10 +50,10 @@ and [PyTorch](https://pytorch.org/) preinstalled):

- **Notebooks** with free
GPU: <a href="https://bit.ly/yolov5-paperspace-notebook"><img src="https://assets.paperspace.io/img/gradient-badge.svg" alt="Run on Gradient"></a> <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>
- **Google Cloud** Deep Learning VM.
See [GCP Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/GCP-Quickstart)
- **Amazon** Deep Learning AMI. See [AWS Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/AWS-Quickstart)
See [GCP Quickstart Guide](environments/google_cloud_quickstart_tutorial.md)
- **Amazon** Deep Learning AMI. See [AWS Quickstart Guide](environments/aws_quickstart_tutorial.md)
- **Docker Image**.
See [Docker Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/Docker-Quickstart) <a href="https://hub.docker.com/r/ultralytics/yolov5"><img src="https://img.shields.io/docker/pulls/ultralytics/yolov5?logo=docker" alt="Docker Pulls"></a>
See [Docker Quickstart Guide](environments/docker_image_quickstart_tutorial.md) <a href="https://hub.docker.com/r/ultralytics/yolov5"><img src="https://img.shields.io/docker/pulls/ultralytics/yolov5?logo=docker" alt="Docker Pulls"></a>

## Status

76

docs/yolov5/quickstart_tutorial.md

Normal file

@@ -0,0 +1,76 @@

# YOLOv5 Quickstart

See below for quickstart examples.

## Install

Clone repo and install [requirements.txt](https://github.com/ultralytics/yolov5/blob/master/requirements.txt) in a
[**Python>=3.7.0**](https://www.python.org/) environment, including
[**PyTorch>=1.7**](https://pytorch.org/get-started/locally/).

```bash
git clone https://github.com/ultralytics/yolov5  # clone
cd yolov5
pip install -r requirements.txt  # install
```

## Inference

YOLOv5 [PyTorch Hub](https://github.com/ultralytics/yolov5/issues/36) inference. [Models](https://github.com/ultralytics/yolov5/tree/master/models) download automatically from the latest
YOLOv5 [release](https://github.com/ultralytics/yolov5/releases).

```python
import torch

# Model
model = torch.hub.load("ultralytics/yolov5", "yolov5s")  # or yolov5n - yolov5x6, custom

# Images
img = "https://ultralytics.com/images/zidane.jpg"  # or file, Path, PIL, OpenCV, numpy, list

# Inference
results = model(img)

# Results
results.print()  # or .show(), .save(), .crop(), .pandas(), etc.
```
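
The `.pandas()` accessor mentioned above returns detections as tables whose `xyxy[0]` frame has the columns `xmin`, `ymin`, `xmax`, `ymax`, `confidence`, `class`, and `name`. The sketch below shows one way to post-filter such rows. It uses plain dictionaries with made-up values so that it runs without PyTorch, and `filter_detections` is a hypothetical helper, not part of YOLOv5.

```python
def filter_detections(rows, min_confidence=0.5, names=None):
    """Keep detections above a confidence threshold, optionally restricted to class names."""
    kept = []
    for row in rows:
        if row["confidence"] < min_confidence:
            continue
        if names is not None and row["name"] not in names:
            continue
        kept.append(row)
    return kept


# Made-up detections in the column layout of results.pandas().xyxy[0]
rows = [
    {"xmin": 10.0, "ymin": 20.0, "xmax": 200.0, "ymax": 400.0, "confidence": 0.88, "class": 0, "name": "person"},
    {"xmin": 150.0, "ymin": 180.0, "xmax": 220.0, "ymax": 300.0, "confidence": 0.31, "class": 27, "name": "tie"},
]
for det in filter_detections(rows, min_confidence=0.5):
    print(det["name"], det["confidence"])
```

With real results, `results.pandas().xyxy[0].to_dict("records")` should yield rows in this shape.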

## Inference with detect.py

`detect.py` runs inference on a variety of sources, downloading [models](https://github.com/ultralytics/yolov5/tree/master/models) automatically from
the latest YOLOv5 [release](https://github.com/ultralytics/yolov5/releases) and saving results to `runs/detect`.

```bash
python detect.py --weights yolov5s.pt --source 0                               # webcam
                                               img.jpg                         # image
                                               vid.mp4                         # video
                                               screen                          # screenshot
                                               path/                           # directory
                                               list.txt                        # list of images
                                               list.streams                    # list of streams
                                               'path/*.jpg'                    # glob
                                               'https://youtu.be/Zgi9g1ksQHc'  # YouTube
                                               'rtsp://example.com/media.mp4'  # RTSP, RTMP, HTTP stream
```
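
To illustrate how a single `--source` string can stand for so many input kinds, here is a simplified sketch of a classifier for the values listed above. It is an illustrative approximation, not the logic `detect.py` itself uses. The function name, the category labels, and the suffix sets are assumptions.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}  # illustrative subset
VIDEO_SUFFIXES = {".mp4", ".avi", ".mov", ".mkv"}  # illustrative subset
URL_PREFIXES = ("rtsp://", "rtmp://", "http://", "https://")


def classify_source(source):
    """Guess which kind of input a --source string refers to (simplified)."""
    s = source.lower()
    suffix = Path(s).suffix
    if s.isnumeric():
        return "webcam"
    if s.startswith("screen"):
        return "screenshot"
    if s.endswith(".streams"):
        return "stream list"
    if s.startswith(URL_PREFIXES):
        return "stream or URL"
    if "*" in s:
        return "glob"
    if suffix in IMAGE_SUFFIXES:
        return "image"
    if suffix in VIDEO_SUFFIXES:
        return "video"
    if suffix == ".txt":
        return "image list"
    return "directory"


for src in ["0", "img.jpg", "vid.mp4", "path/", "path/*.jpg", "rtsp://example.com/media.mp4"]:
    print(f"{src!r}: {classify_source(src)}")
```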

## Training

The commands below reproduce YOLOv5 [COCO](https://github.com/ultralytics/yolov5/blob/master/data/scripts/get_coco.sh)
results. [Models](https://github.com/ultralytics/yolov5/tree/master/models)
and [datasets](https://github.com/ultralytics/yolov5/tree/master/data) download automatically from the latest
YOLOv5 [release](https://github.com/ultralytics/yolov5/releases). Training times for YOLOv5n/s/m/l/x are
1/2/4/6/8 days on a V100 GPU ([Multi-GPU](https://github.com/ultralytics/yolov5/issues/475) times faster). Use the
largest `--batch-size` possible, or pass `--batch-size -1` for
YOLOv5 [AutoBatch](https://github.com/ultralytics/yolov5/pull/5092). Batch sizes shown for V100-16GB.

```bash
python train.py --data coco.yaml --epochs 300 --weights '' --cfg yolov5n.yaml  --batch-size 128
                                                                 yolov5s                     64
                                                                 yolov5m                     40
                                                                 yolov5l                     24
                                                                 yolov5x                     16
```
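
The table above is shorthand for five separate commands, one per model size. As a small sketch, the snippet below expands it into full command lines. The model names and batch sizes are taken from the block above, while `train_command` is a hypothetical helper that only prints the commands.

```python
# Model name -> batch size, as listed above for a V100-16GB
BATCH_SIZES = {"yolov5n": 128, "yolov5s": 64, "yolov5m": 40, "yolov5l": 24, "yolov5x": 16}


def train_command(model, batch_size, data="coco.yaml", epochs=300):
    """Build the from-scratch training command for one model size."""
    return (
        f"python train.py --data {data} --epochs {epochs} --weights '' "
        f"--cfg {model}.yaml --batch-size {batch_size}"
    )


for model, batch_size in BATCH_SIZES.items():
    print(train_command(model, batch_size))
```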

<img width="800" src="https://user-images.githubusercontent.com/26833433/90222759-949d8800-ddc1-11ea-9fa1-1c97eed2b963.png">

@@ -191,19 +191,3 @@ Match positive samples:

- Because the center point offset range is adjusted from (0, 1) to (-0.5, 1.5), a GT box can be assigned to more anchors.
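
As a numeric illustration of the widened range, the sketch below assumes the center offset is computed as `2 * sigmoid(t) - 0.5` from a raw prediction `t`, which maps any real `t` into the open interval (-0.5, 1.5). The function names are illustrative only.

```python
import math


def sigmoid(t):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-t))


def center_offset(t):
    """Offset of the box center from the grid cell corner: 2 * sigmoid(t) - 0.5."""
    return 2.0 * sigmoid(t) - 0.5


for t in (-10.0, 0.0, 10.0):
    print(f"t={t:+.1f} -> offset={center_offset(t):.4f}")
```

Because the offset can reach past the cell boundaries on both sides, a ground-truth center near a cell edge can also be matched from the neighboring cell.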

<img src="https://user-images.githubusercontent.com/31005897/158508139-9db4e8c2-cf96-47e0-bc80-35d11512f296.png#pic_center" width=70%>

## Environments

YOLOv5 may be run in any of the following up-to-date verified environments (with all dependencies including [CUDA](https://developer.nvidia.com/cuda)/[CUDNN](https://developer.nvidia.com/cudnn), [Python](https://www.python.org/) and [PyTorch](https://pytorch.org/) preinstalled):

- **Notebooks** with free GPU: <a href="https://bit.ly/yolov5-paperspace-notebook"><img src="https://assets.paperspace.io/img/gradient-badge.svg" alt="Run on Gradient"></a> <a href="https://colab.research.google.com/github/ultralytics/yolov5/blob/master/tutorial.ipynb"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"></a> <a href="https://www.kaggle.com/ultralytics/yolov5"><img src="https://kaggle.com/static/images/open-in-kaggle.svg" alt="Open In Kaggle"></a>

- **Google Cloud** Deep Learning VM. See [GCP Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/GCP-Quickstart)

- **Amazon** Deep Learning AMI. See [AWS Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/AWS-Quickstart)

- **Docker Image**. See [Docker Quickstart Guide](https://github.com/ultralytics/yolov5/wiki/Docker-Quickstart) <a href="https://hub.docker.com/r/ultralytics/yolov5"><img src="https://img.shields.io/docker/pulls/ultralytics/yolov5?logo=docker" alt="Docker Pulls"></a>

## Status

<a href="https://github.com/ultralytics/yolov5/actions/workflows/ci-testing.yml"><img src="https://github.com/ultralytics/yolov5/actions/workflows/ci-testing.yml/badge.svg" alt="YOLOv5 CI"></a>

If this badge is green, all [YOLOv5 GitHub Actions](https://github.com/ultralytics/yolov5/actions) Continuous Integration (CI) tests are currently passing. CI tests verify correct operation of YOLOv5 [training](https://github.com/ultralytics/yolov5/blob/master/train.py), [validation](https://github.com/ultralytics/yolov5/blob/master/val.py), [inference](https://github.com/ultralytics/yolov5/blob/master/detect.py), [export](https://github.com/ultralytics/yolov5/blob/master/export.py) and [benchmarks](https://github.com/ultralytics/yolov5/blob/master/benchmarks.py) on macOS, Windows, and Ubuntu every 24 hours and on every commit.
